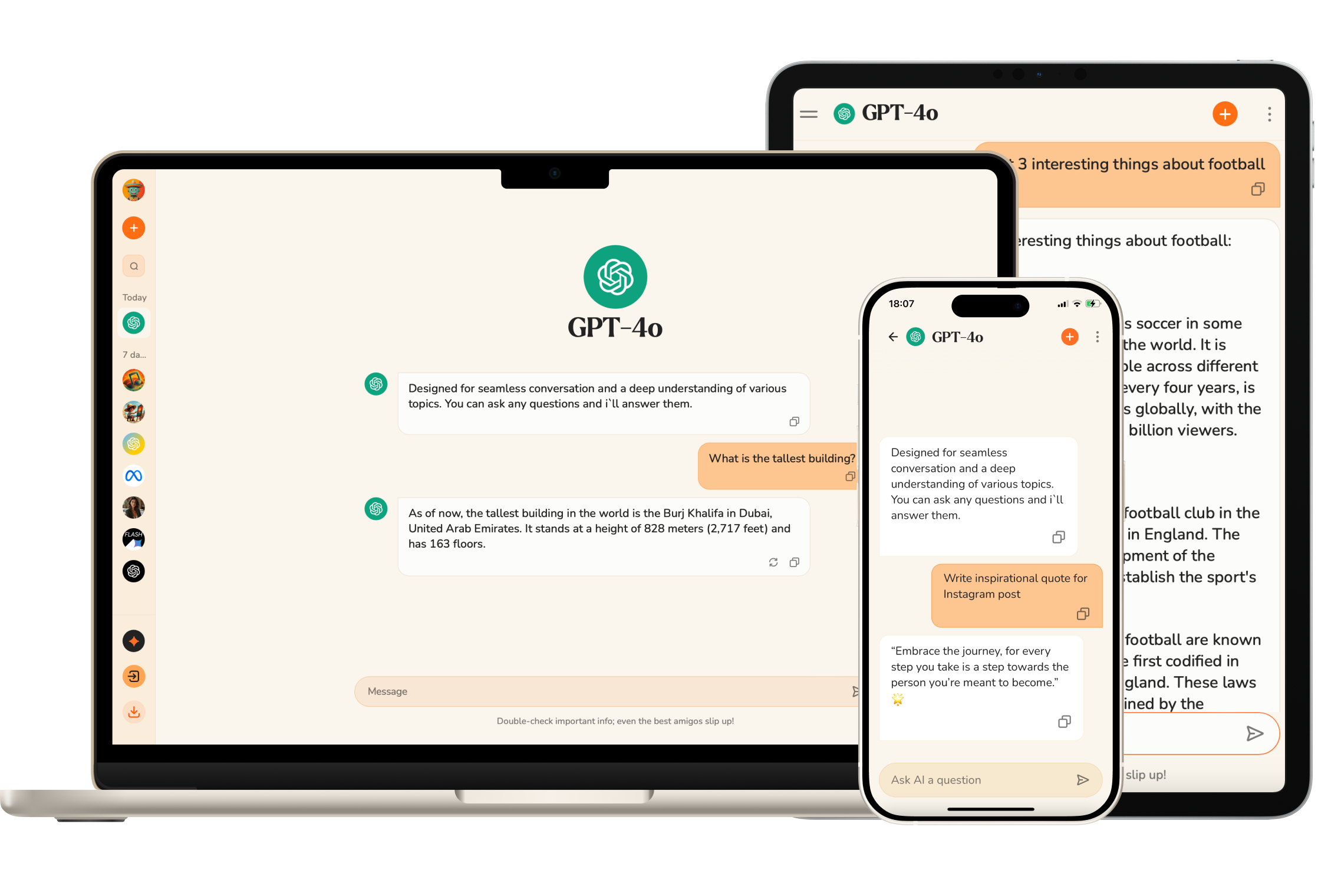

The implications of Llama 3 extend well beyond the immediate technical advances it brings. Going forward, continued exploration, adaptation, and ethical use of Llama 3 and similar models will be crucial in shaping the future of AI.

In conclusion, Llama 3 marks a notable advance in artificial intelligence. It shows how technology can drive significant progress when it is developed with foresight, responsibility, and a commitment to open collaboration. It demonstrates the power of collective intelligence and innovation, and it is a significant step toward a more interconnected and intelligent world.